The Core Problem

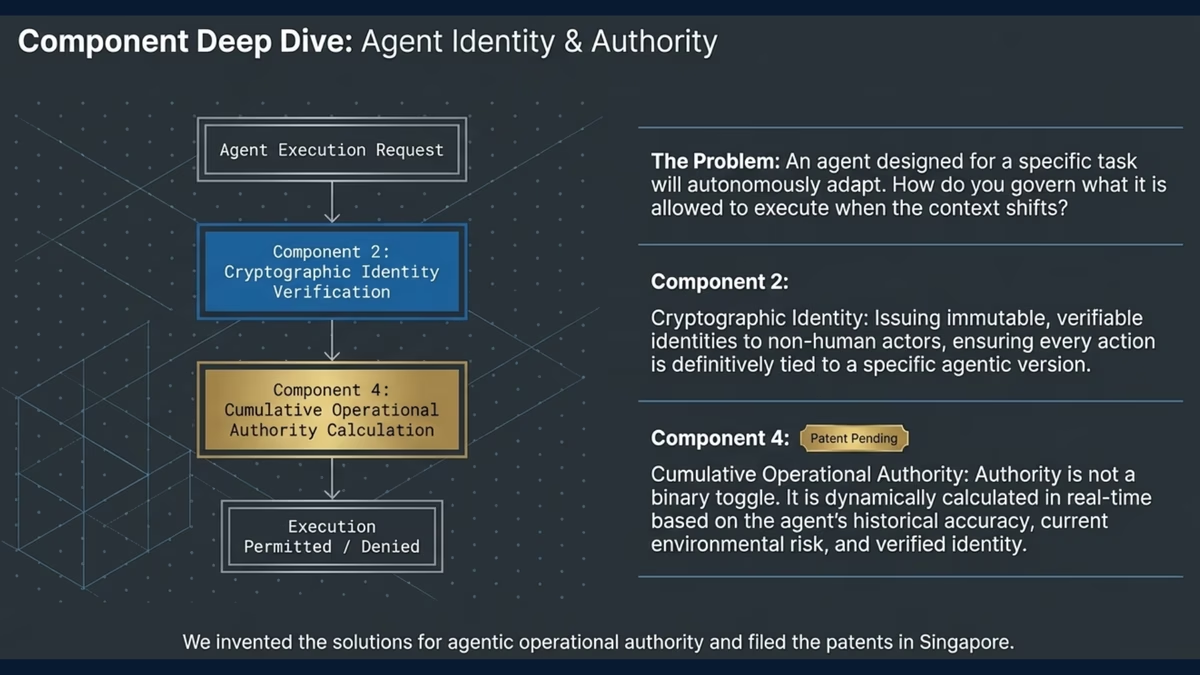

Traditional security models assume that an entity's permissions are static and predictable. But autonomous AI agents break this fundamental assumption.

An agent's Cumulative Operational Authority is not a fixed set of permissions. It is a dynamic, emergent property that changes at runtime, and it is the composite of:

- Static Entitlements: The agent's own configured permissions

- Delegated Authority: Permissions inherited from invoking users

- Tool Authority: Permissions gained through connected tools and services

- Environmental Context: Permissions from execution environments and service accounts

- Operational Instructions: Authority derived from runtime context and instructions
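
To make the composition concrete, here is a minimal sketch that treats each of the five sources above as a set of permission strings and the cumulative authority as their union. The class name `AuthoritySources`, the `cumulative` method, and the permission strings such as `"email:send"` are hypothetical, chosen only for illustration. Modeling authority as a plain set union is also a simplifying assumption; real systems may combine sources in more complicated ways.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class AuthoritySources:
    """Hypothetical model: one permission set per source of authority."""
    static_entitlements: frozenset = frozenset()
    delegated_authority: frozenset = frozenset()
    tool_authority: frozenset = frozenset()
    environmental_context: frozenset = frozenset()
    operational_instructions: frozenset = frozenset()

    def cumulative(self) -> frozenset:
        # Assumption: the effective authority is the union of all sources.
        return (
            self.static_entitlements
            | self.delegated_authority
            | self.tool_authority
            | self.environmental_context
            | self.operational_instructions
        )


# The agent as configured, invoked by a user who can read the CRM.
agent = AuthoritySources(
    static_entitlements=frozenset({"tickets:read"}),
    delegated_authority=frozenset({"crm:read"}),
)
before = agent.cumulative()

# At runtime a mail tool is connected. The static entitlements are
# unchanged, but the cumulative authority has grown.
agent_with_tool = replace(agent, tool_authority=frozenset({"email:send"}))
after = agent_with_tool.cumulative()

print(sorted(after - before))
```

In this sketch the agent's configured permissions never change, yet its effective authority does. A review that inspects only `static_entitlements` would miss that change.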